
How To Scrape Amazon Product Data & Reviews In 2023

Tip 4 - No output in the sheet: in 'Write Data to a Google Sheet', check that the data action is linked. We always recommend doing a trial run: in the 'Dive Action,' set the cycles to a low number, perhaps 2-3, then click run. When the run stops, check that the right data is in the Google Sheet. Maximum cycles: set the number of loops the bot should perform. You can track the trend, watch each product's review count grow, and see how the prices vary. This step joins the Google Sheet data to the scraped data. In step 2.2, select the data you want to scrape from a product page. Get the free guide that shows you exactly how to use proxies to avoid blocks, bans, and captchas in your business. This will create a JSON file containing all the scraped product information. You can use BeautifulSoup to select these links and extract the href attributes.

- After you have entered all the keywords you want, click the "Start" button to launch the scraper.
- Analyze your competition to identify what you can do better and improve your products and value proposition.
- You can track the trend, watch each product's review count grow, and see how the prices rise and fall.
- Paste the URL into the tool and choose the part you want to scrape.

It is important to make your User-Agent look as plausible as possible. However, to reach the product details, you will start with product listing or category pages. So far, we have learned how to scrape product information.

Legal Considerations You Should Know While Scraping Amazon

Make sure your fingerprint parameters are consistent, or choose Web Unblocker, an AI-powered proxy solution with dynamic fingerprinting functionality. We can read the href attribute of this selector and run a loop. You would need to use the urljoin method to turn these relative links into absolute URLs. The category page displays the product title, product image, product rating, product price, and, most importantly, the product page URLs. If you want more details, such as product descriptions, you will get them only from the product details page. Cash tied up in stock is a potential problem for online sales. Products can sometimes go unsold for longer than expected, resulting in increased inventory costs. In this case, companies set their products' prices lower than the market competition.

What Is Amazon Scraper?

This might be because you haven't taken care of the efficiency and speed of the algorithm. You can do some basic math while designing the algorithm. Remove the query parameters from the URLs to remove identifiers linking requests together. Rotate the IPs through different proxy servers if you need to. You can also deploy a consumer-grade VPN service with IP rotation capabilities. If you want to explore all the opportunities these large eCommerce platforms have for your business, contact us.
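The link-handling steps above (read each href, resolve it with urljoin, drop the query parameters) can be sketched as follows. This is a minimal illustration, not the article's own code: it uses the standard library's `html.parser` in place of BeautifulSoup so it runs without extra dependencies, and the `product-link` class name, the sample HTML, and the `example.com` URLs are all assumptions made up for the example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit, urlunsplit


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag that carries a given CSS class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        if self.css_class in classes and attr_map.get("href"):
            self.hrefs.append(attr_map["href"])


def clean_product_urls(html, base_url, css_class="product-link"):
    """Return absolute product URLs with query strings and fragments removed."""
    collector = LinkCollector(css_class)
    collector.feed(html)
    cleaned = []
    for href in collector.hrefs:
        absolute = urljoin(base_url, href)  # relative link -> absolute URL
        parts = urlsplit(absolute)
        # Keep only scheme, host and path; drop tracking identifiers.
        cleaned.append(urlunsplit((parts.scheme, parts.netloc, parts.path, "", "")))
    return cleaned


# Hypothetical category-page snippet; real markup will differ.
SAMPLE_HTML = """
<div><a class="product-link" href="/dp/B000000001?ref=sr_1_1">Item one</a></div>
<div><a class="product-link" href="/dp/B000000002/?tag=abc">Item two</a></div>
<div><a class="nav" href="/help">Help</a></div>
"""

product_urls = clean_product_urls(SAMPLE_HTML, "https://www.example.com/s?k=laptop")
for url in product_urls:
    print(url)
```

With BeautifulSoup, the collector class would likely shrink to a single `select` call over a CSS selector; the urljoin and query-stripping steps would stay the same.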

Amazon Scraper

The method remains the same: create a CSS selector and use the select_one method. To identify the user-agent sent by your browser, press F12 and open the Network tab. Select the first request and check the Request Headers.
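Once you have copied the User-Agent string from the Request Headers, it can be attached to your own requests. The sketch below only builds the request object and does not send anything; the User-Agent value, the `Accept-Language` header, and the URL are placeholder assumptions, so substitute the string your own browser reports.

```python
from urllib.request import Request

# Hypothetical value: replace it with the string shown in your browser's
# Network tab under Request Headers.
BROWSER_USER_AGENT = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36"
)


def build_request(url, user_agent=BROWSER_USER_AGENT):
    """Build (but do not send) a request that carries browser-like headers."""
    headers = {
        "User-Agent": user_agent,
        "Accept-Language": "en-US,en;q=0.9",
    }
    return Request(url, headers=headers)


request = build_request("https://www.example.com/dp/B000000001")
# urllib stores header names capitalized as "User-agent".
print(request.get_header("User-agent"))
```

Passing the resulting object to `urllib.request.urlopen` would perform the actual fetch.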


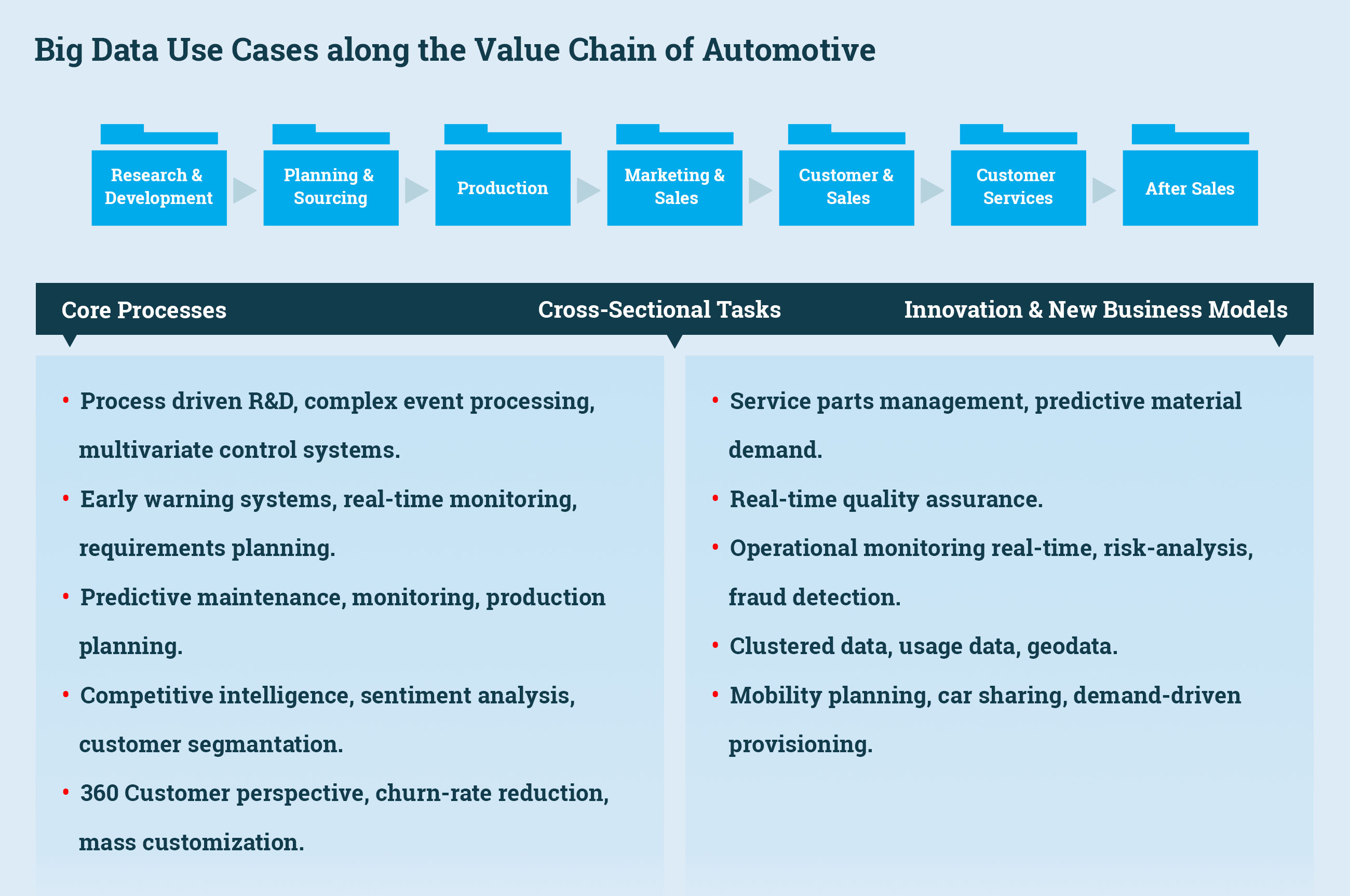

Price Optimization In Retail: 5 Sales-Boosting Examples

When we are talking about thousands of SKUs, manual optimization simply does not cut it. High accuracy means reliable results that key decision-makers can act on with confidence. You can use machine learning to your advantage because it adapts and learns, which is its biggest asset. Price optimization in retail is a pricing strategy based on modern mathematical analysis used to predict how demand will change in response to different prices across different channels. Consequently, there is no other direction to go than up should you choose to go the price optimization route.


Want To Get Up To Speed With Competitor Monitoring, Price Tracking And More?

SKU Journey: discover how AI-driven pricing maximizes each SKU's potential at every stage of its lifecycle. Automation is key to both the speed and accuracy of these models. "By eliminating manual processes, information collected provides a more accurate picture to help pinpoint needed change," Yarnell explains.

How To Implement Price Optimization?

Each of your products should go through a price elasticity analysis to understand how elastic its demand is. Effective and timely price optimization can happen only if you perform a price elasticity analysis of your products and services. This means a full review of current practices and a complete tune-up of the strategy. Are the same competitors from two years ago still relevant, and are you missing new threats that have emerged during a unique year of severe customer leakage and shifting banner and brand loyalties?
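As a rough illustration of what a price elasticity analysis computes, the sketch below uses the simple percentage-change definition: the percentage change in quantity sold divided by the percentage change in price. The function names and the sample numbers are assumptions for the example; a real analysis would typically fit elasticity from many observations rather than from two points.

```python
def price_elasticity(old_price, new_price, old_quantity, new_quantity):
    """Simple point elasticity: % change in quantity / % change in price."""
    pct_quantity = (new_quantity - old_quantity) / old_quantity
    pct_price = (new_price - old_price) / old_price
    if pct_price == 0:
        raise ValueError("price did not change, elasticity is undefined")
    return pct_quantity / pct_price


def is_elastic(elasticity):
    """Demand is conventionally called elastic when |elasticity| exceeds 1."""
    return abs(elasticity) > 1


# Hypothetical observation: raising the price from 10 to 11 (a 10% increase)
# cut weekly sales from 100 to 80 units (a 20% decrease).
elasticity = price_elasticity(10, 11, 100, 80)
print(round(elasticity, 2), is_elastic(elasticity))
```

A product whose demand is elastic loses proportionally more volume than it gains in price, which is why each product needs its own measurement.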

- Many retailers scrape competitors' websites for price data and use it to set their own prices manually or automatically, usually by charging X dollars or X percent less than the lowest-priced competitor.
- In addition, price components keep changing faster and faster, which means industries have had to adapt quickly to stay relevant.
- The type of market price optimization described above, using specific products to win new customers, is a competitive pricing model more akin to the loss-leader strategy.
- When optimizing promotional prices, businesses can introduce customers to a new product or a bundle to drive sales.
- Besides, a price set yesterday may no longer be valid today, which highlights the complexity of making pricing decisions.
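The undercutting rule mentioned in the list above (charge X dollars or X percent less than the lowest-priced competitor) can be sketched in a few lines. The `floor` parameter is an added assumption, not something the text describes: it stands in for a minimum price, such as cost, below which the rule should not go. All prices are made-up sample values.

```python
def undercut_price(competitor_prices, amount=0.0, percent=0.0, floor=0.0):
    """Price below the cheapest competitor by a fixed amount and/or a percentage.

    `floor` is a hypothetical safeguard so the result never drops under a
    minimum acceptable price.
    """
    if not competitor_prices:
        raise ValueError("at least one competitor price is required")
    lowest = min(competitor_prices)
    candidate = lowest - amount - lowest * percent / 100
    return round(max(candidate, floor), 2)


# Hypothetical scraped competitor prices for one SKU.
scraped_prices = [19.99, 21.50, 18.75]
five_percent_under = undercut_price(scraped_prices, percent=5)
one_dollar_under = undercut_price(scraped_prices, amount=1.0)
print(five_percent_under, one_dollar_under)
```

Because the input comes straight from scraped data, a rule like this is only as good as the freshness and accuracy of the competitor prices fed into it.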

Software that lets you test 'what if' scenarios enables you to optimize your prices better in the future. This ties in with the previous step because it is also about collecting data. You need to look at customer reviews, demand data, customer sentiment, market trends, and inventory data. This data guides you towards the necessary changes to make to both prices and product features. In addition, you can get further information from customers through surveys or interviews.

Respond To Market Changes Faster

There are additional challenges that trouble businesses and hinder their growth. Data delays can stop businesses from making timely decisions, which may allow competitors to take the lead and capture market share quickly. The most significant advantage of software for businesses around the world is that it can save you a great deal of time for other important tasks. Optimizing prices for the retail market is not an easy task: stakeholders need to thoroughly research, analyze and then push the prices to the market, and with software all of this can be done quickly. MediaMarktSaturn uses Pricefx's real-time analytics to monitor the effectiveness of its pricing strategy.

Serverless Data Integration - Amazon Web Services

End users typically access a unified data set through an application interface, such as an analytics dashboard, that helps them understand and use the data to develop actionable insights. We are helping healthcare organizations integrate data from disparate sources to derive insights that support value-based care. Regulatory mandates, advances in treatment, and data security have increased the complexities and risks that healthcare organizations face in managing IT infrastructure. Using best practices from the ITIL methodology and a rigorous focus on HIPAA compliance, we manage all aspects of cloud environments. Next-generation data integration solutions and services for modern healthcare enterprises. Empowerment to get the best value from data for clinical outcomes, operational efficiency, and a better member experience. Any third-generation system will use data and machine learning to make automatic or semi-automatic curation decisions. Certainly, it will use sophisticated techniques such as T-tests, regression, predictive modeling, data clustering, and classification. Many of these techniques will require training data to set internal parameters.


How To Deliver Integration Flexibility And User Scalability At Enterprise Scale

More easily support different data processing frameworks, such as ETL and ELT, and various workloads, including batch, micro-batch, and streaming. Schedule a one-on-one consultation with experts who have worked with hundreds of clients to build winning data, analytics and AI strategies. Explore how the IBM DataOps methodology and practice can help you deliver a business-ready data pipeline. This capability makes data easily discovered, selected, and provisioned to any destination while reducing IT dependence, accelerating analytic outcomes and lowering data costs.

- Another challenge is the complexity of integrating diverse data formats and structures.
- A data migration solution combines technology with best practices to keep all your ongoing data migrations on track, on time, and on budget.
- The Databricks Lakehouse Platform is ideally suited to handle large amounts of streaming data.

Another challenge is the complexity of integrating diverse data formats and structures. Traditional approaches require extensive coding and manual mapping to transform data into a standardized format that can be easily integrated. This not only requires significant time and effort but also increases the risk of errors or inconsistencies in the integrated dataset. Another significant advantage of scalable data integration techniques is their flexibility and adaptability. In today's dynamic business environment, organizations need to be able to respond quickly to changing data requirements and incorporate new data sources seamlessly.

Clean And Transform Streaming Data In-flight

If a new client wants to monitor six new data sources, the building process will delay the project by at least half a year. With the advent of rapidly growing cloud data warehouses and the constant stream of new opportunities, data-driven teams must build growth-centric technology frameworks to seize momentum. Explore how IBM DataOps builds a scalable and agile data-driven culture through automation, data quality and governance with this interactive guide. With a master data management platform, Sonoma County was able to connect four disparate data pools of 91,000 clients to serve its community better. While applying customer data privacy practices as part of data governance, Lead also became a digital transformation leader in its industry. A scale of thousands of users building or running their own integrations can only happen if a platform is easy to use. Instead of an architecture with a human controlling the process with computer assistance, move to an architecture with the computer running an automated process, asking a human for help only when required. Scalable AI big data integration helps organizations load unstructured and structured data from any source seamlessly. Manufacturing generates several data types, either semi-structured (JSON, XML, MQTT, etc.) or unstructured (video, audio, PDF, etc.), which the platform pattern fully supports. By merging all these data types onto one platform, only one version of the truth exists, leading to more accurate results. Learn how to create data pipelines with the AWS Glue Studio visual ETL interface. Using AWS Glue interactive sessions, data engineers can interactively explore and prepare data using the integrated development environment or notebook of their choice.

With traditional data integration approaches, organizations often struggle to handle large volumes of data and process it in a timely manner. This can cause delays in accessing and analyzing critical information, ultimately affecting decision-making processes. However, as the amount of data continues to grow exponentially, organizations are finding it increasingly difficult to scale their data integration efforts. In this article, we will explore the challenges faced by data-driven organizations in scaling data integration and discuss some effective solutions. One of the key advantages of scalable data integration techniques is the ability to handle large volumes of data. Modern cloud-based data repository infrastructure holds a vast amount of raw, unstructured, and structured data in its native format. It enables plug-and-play data integrations backed by industry-leading, enterprise-grade security. Increased operational efficiency through a highly scalable, cloud-based platform for data integration, visualization, and analytics tools.

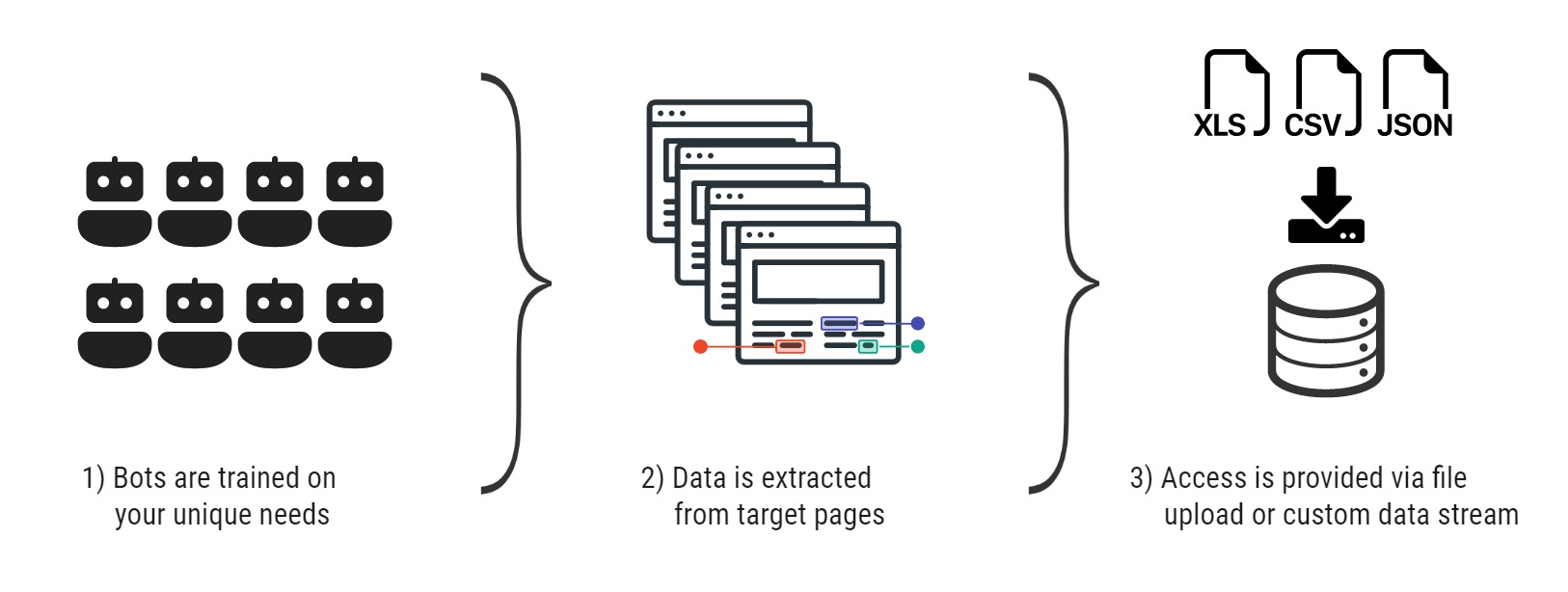

Email Scraping: How To Extract Email Addresses From Incoming Emails And Attachments

Basically, a program extracts data from many websites, applications or databases and presents it to you in a human-readable form. The most common approach is for the data to be delivered straight to you in a spreadsheet, preferably a CSV file. Join the 8,250+ customers that have already trusted us to drive their business forward. Leverage AI-powered technology and take your business to the next level with our cloud-based web scraping service. Working with web scraping can be daunting, but working with an experienced web scraping company makes the journey much easier. A web scraping service can help you collect and store data in a secure manner, providing the necessary safeguards to ensure the safety of your data.
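For the incoming-email case named in the heading, a minimal sketch using Python's standard `email` package might look like the following. It reads addresses from the common address headers and scans every text part of the message (which includes text attachments) with a deliberately simple regular expression. The pattern, the header list, and the sample message are assumptions for illustration; binary attachments such as PDFs would need a separate extraction step that is not shown here.

```python
import re
from email import message_from_string
from email.utils import getaddresses

# Deliberately simple pattern; it will not cover every valid address form.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_addresses(raw_message):
    """Return the sorted, de-duplicated addresses found in one raw email."""
    message = message_from_string(raw_message)
    found = set()

    # 1. Addresses in the structured headers.
    header_values = []
    for name in ("From", "To", "Cc", "Reply-To"):
        header_values.extend(message.get_all(name, []))
    for _display_name, address in getaddresses(header_values):
        if address:
            found.add(address.lower())

    # 2. Addresses mentioned in any text part, including text attachments.
    for part in message.walk():
        if part.get_content_maintype() != "text":
            continue
        payload = part.get_payload(decode=True) or b""
        charset = part.get_content_charset() or "utf-8"
        text = payload.decode(charset, errors="replace")
        found.update(match.lower() for match in EMAIL_PATTERN.findall(text))

    return sorted(found)


# Hypothetical incoming message.
RAW_MESSAGE = (
    "From: Alice Example <alice@example.com>\n"
    "To: sales@example.org\n"
    "Subject: Quote request\n"
    "\n"
    "Please copy bob.smith@example.net on the reply.\n"
)

addresses = extract_addresses(RAW_MESSAGE)
print(addresses)
```

The resulting list could then be written to a CSV file, the delivery format the paragraph above describes as most common.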


Web Scraping Craigslist: Top 5 Craigslist Scrapers Of 2023

Agenty is a SaaS platform that helps you extract data from static and AJAX websites, list pages, password-protected websites, and JSON and XML web APIs. This tool lets you connect with people, local businesses and companies in a given industry. Entrepreneurs around the world are scraping data from websites and social media. WSaaS is your go-to solution for quickly extracting useful data from websites so you can achieve your marketing goals. Web scraping services are a great way to keep up with ever-changing search engine algorithms and improve website visibility on search engines. In addition, you can use web scraping services to track what influencers are saying about you, your brand or your industry. Finally, email scraping tools offer a powerful solution for marketers looking to improve their email marketing strategies. By leveraging these tools, you can save time and effort in building a quality email list and target your audience more effectively. However, it's crucial to use these tools responsibly, respecting privacy laws and ethical guidelines. Web scraping tools extract all relevant information about your promising leads, including their names and email IDs, from websites and social media accounts. As a smart business owner, you know that having a high-quality email database is essential for effective prospect outreach.

- So, sign up for Mailarrow today to make the most of your scraped email addresses and supercharge your marketing campaigns.
- By using Hunter.io to extract email addresses from industry-specific websites, 12 a.m
- Using web scraping services, businesses can effectively monitor their competitors' pricing trends, stock levels and customer preferences.
- Extracted data can be used for price comparisons, prospecting, and risk assessment.

It is best to carry out a thorough review of scraped data for accuracy and completeness. Such a review adds an extra layer of quality assurance, which is critical before using the data to drive marketing activities. You should therefore supplement automated web scraping with a manual review to make sure the extracted data meets your quality standards. By analyzing the collected data, you can home in on which areas of your product could use improvement, to increase customer interest and solidify your product-market fit.

More Articles On Marketing Processes

This enables you to target specific audiences and collect high-quality leads. Aeroleads offers a comprehensive lead generation solution, including email scraping, contact enrichment, and CRM integration. With Aeroleads, you can streamline your lead generation process and improve your conversion rates. But before that, don't forget to check out Mailarrow, our cold email outreach software, to help improve your marketing campaigns.

Legal And Ethical Considerations When Using Email Scraping Tools

One of the channels sales teams use to reach their prospective customers is email marketing. A web scraping tool provides you with a list of your prospects' email addresses. You want to stay on top of the latest trends, best practices, and examples of successful email marketing campaigns that have achieved high customer retention rates. You can use resources like blogs, podcasts, webinars, books, and courses to learn from the best and get inspired for your own email marketing campaigns.

What Is An Application Programming Interface (API)?

To integrate your applications using APIs, you might need to use application programming experts such as Soft Pull Solutions or other local firms. An application programming interface acts as a messenger for handling requests and helps your organization's systems work together seamlessly. With APIs, you can combine the interaction of data, devices, and applications. An API can also be described as an online programming interface for your business, allowing your business applications to communicate seamlessly with all backend systems.


Where Can I Find New APIs?

An insecure API exposed to the internet can lead to a serious compromise. APIs solved this problem by serving as a common set of functions that can act as a translator or intermediary between different applications. They're an essential part of the current technological revolution: pipelines that allow a quick and smooth transfer of information and application functionality. APIs exist to unleash the potential of a business and, at the same time, they offer disruptive options for companies looking to leverage their network. The API enables the bulk sending and recharge of data pack plans and is especially useful for enabling international top-ups across a range of operators for 5.22 billion prepaid mobile phones. Indeed, APIs empower digital businesses to reach new business models and turn their digital assets into new revenue streams.

- But to process them, they need to access servers and databases that contain customers, products, and inventory levels.
- APIs allow applications and system components to communicate with each other on internal networks as well as over the internet.
- Building a tightly connected, collaborative ecosystem of external and internal partnerships will.
- With an API, your application or service can use the features provided by another application without needing to know how that application is implemented.

APIs make it possible for multiple software applications to communicate and share information. Director of Marketing: As Director of Marketing for Technology Advisors Inc., Danine leads TAI events, campaigns, search engine optimization and website management, webinars, user groups, and social media initiatives. Her goal is to support TAI's mission to listen, personalize, and stay with its clients by crafting honest, helpful, and informative marketing content. Danine's interests include blockbuster disaster movies, tank tops in an array of colors, used bookstores, Jurassic Park, and being surrounded by trees. Using APIs means that developers can get much of the software functionality they need to build applications without having to build it from scratch.

Businessapac Work

For that reason, there needs to be an ongoing data exchange that integrates the online store with the shopping cart. An API is a set of protocols, definitions, and tools that enable interaction and communication between software components. It allows a user to communicate with a web-based tool or application. With an API, users can use an interface to request something from an application. The application then passes the data to an API, which interprets the information and returns a response.

What Does The Future Of Data Science Hold?

The main benefit of automated web scraping is that it lets you collect data far more quickly, efficiently, and thoroughly than you could by hand. So the attitude towards open data will certainly influence the future of web scraping, creating more challenges for companies to overcome. Web data extraction could become a luxury that only a limited number of companies can afford, due to higher prices. Large companies already offer access to their APIs for an additional fee, and more companies will do so in the future.


Is Data Scraping Legal?

You might use data scraping to determine the price of your products and the number of potential customers. This type of analysis has always been the best use of data scraping by professionals. In addition, it gives businesses a competitive advantage by allowing them to act quickly in response to changes in their competitors' pricing strategies and make data-driven decisions.

Data Center Proxies

The world today is data-driven, and the future of data science is expanding. Even when you account for the Earth's entire population, the average person was expected to generate 1.7 megabytes of data per second by the end of 2020, according to cloud vendor Domo. Consequently, the data extraction space in general, and web scraping in particular, is expected to become an increasingly complex domain, requiring ever-increasing levels of specialized knowledge and expertise. Contact us today to learn more about how we can help you navigate anti-scraping measures and extract web data with confidence. In addition, companies must stand up robust data validation capabilities to ensure strict data quality guarantees that meet the specific requirements of the business. To stay ahead of the curve, it's important to understand and act on the latest trends and predictions in the ever-evolving field of web data extraction and big data.

- This means that while hiQ did not break criminal law, it breached a contract (created by accepting LinkedIn's Terms of Service).
- Even if your business has nothing to do with the web, you'll be able to find lots of useful and helpful information online, which might help you stay competitive.
- Paired with the data scientist's favorite, Jupyter Notebook, Python towers over all the other languages used on GitHub in publicly available web scraping projects as of January 2023.
- Therefore, the data and our findings do not represent the entire data science community.

Looking ahead, I believe businesses worldwide will become increasingly conscientious, scrutinising the origins of IPs and their acquisition methods before finalising contracts with providers. In the proxy industry, it's all too common for companies to simply bury consent somewhere deep within their Terms & Conditions and consider their responsibility fulfilled. Unfortunately, many residential proxy network participants are unaware that their IP addresses are being used, a practice which I've always found unsettling. Neil Emeigh, the CEO of Rayobyte, answers some key questions about the changing landscape of web scraping and ethical data acquisition.

25+ Impressive Big Data Statistics For 2023

In April 2021, 38% of global businesses invested in smart analytics. 60% of organizations in the banking sector used data quantification and monetization in 2020. Global colocation data center market revenue could increase to more than $58 billion by 2025. The installed base of data storage capacity in the global datasphere could reach 8.9 zettabytes by 2024. SAS generated $517 million in revenue from the analytic data integration software market in 2019 alone.

- Forecasts estimate the world will generate 181 zettabytes of data by 2025.
- Investopedia requires writers to use primary sources to support their work.
- On the other hand, hardware will contribute about 23% of the revenue.
- Infographics are usually carefully crafted in a poster or presentation to convey meaning, but they fall short of providing real-time information as they're usually fixed in time.
- However, even in the field of innovation, it can be hard to be successful because it's difficult to find a customer pain point that hasn't been addressed yet.
- Finance and insurance industries use big data and predictive analytics for fraud detection, risk assessments, credit rankings, brokerage services and blockchain technology, among other uses.

This usually means leveraging a distributed file system for raw data storage. Solutions like Apache Hadoop's HDFS filesystem allow large quantities of data to be written across multiple nodes in the cluster. This ensures that the data can be accessed by compute resources, can be loaded into the cluster's RAM for in-memory operations, and can gracefully handle component failures.

Other distributed filesystems can be used in place of HDFS, including Ceph and GlusterFS. The sheer scale of the information processed helps define big data systems. These datasets can be orders of magnitude larger than traditional datasets, which demands more thought at each stage of the processing and storage life cycle. Analytics guides many of the decisions made at Accenture, says Andrew Wilson, the consultancy's former CIO. With flexible data and visualization frameworks, we want to accommodate multiple biases and make it possible to use data to fit our changing needs and queries. Embrace the ambiguous nature of big data, but provide and seek the tools to make it relevant to you. The visual interpretations of the data will vary depending on your goals and the questions you're aiming to answer, and thus, although visual similarities will exist, no two visualizations will be the same. Also, a surge in the region's e-commerce industry is helping the big data technology market share growth. The demand for big data analytics is increasing among enterprises that want to process data cost-effectively and quickly. Analytics solutions also help organizations present information in a more sophisticated format for better decision-making. Key market players are focusing on launching advanced big data solutions with analytics capabilities to improve customer experience. Apache Spark is an open-source analytics engine used for processing large data sets on single-node machines or clusters. Apache Storm can integrate with pre-existing queuing and database technologies, and can also be used with any programming language. Multi-structured data refers to a variety of data formats and types and can be derived from interactions between people and machines, such as web applications or social networks. A good example is web log data, which includes a combination of text and visual images along with structured data like form or transactional information.

Reinforcement Learning: Balancing Exploration And Exploitation

There were 79 zettabytes of data generated worldwide in 2021. For example, Facebook collects around 63 distinct pieces of data for API.

Posted: Tue, 20 Jun 2023 07:00:00 GMT [source] The Elements Of Big DataEvery one of that allows information, too, although it might be towered over by the volume of digital data that's now growing at a rapid rate. Big information is a collection of data from typical and digital resources inside and outside your firm that represents a source for continuous exploration and evaluation. Real-time processing is regularly utilized to picture application and server metrics. The information adjustments regularly Harness the Power of Big Data through Web Scraping and large deltas in the metrics commonly show substantial influence on the wellness of the systems or company. In these instances, tasks like Prometheus can be useful for refining the data streams as a time-series data source and visualizing that information. Typical data tools aren't outfitted to manage this sort of intricacy and quantity, which has caused a slew of specialized large data software application systems and style options created to manage the load. Disorganized information comes from info that is not arranged or easily translated by conventional data sources or information designs, and typically, it's text-heavy. Metadata, Twitter tweets, and other social media sites posts are examples of unstructured data. The intake procedures normally hand the information off to the components that take care of storage space, to make sure that it can be reliably lingered to disk.What Allows Information?In April 2021, 38% of global business reported choosing to invest progressively in wise analytics approximately a moderate level. Smart analytics enable enterprises to efficiently assess substantial pieces of data and drive workable insights to enhance their decision-making. In 2020, the digital service landscape went through a makeover, with 48% Unlock Valuable Insights with Custom Web Scraping of big information and analytics leaders launching many digital makeover efforts. 
Reports indicated that 72% of modern businesses are either leading or involved in digital transformation initiatives. Data sharing, ROI from data and analytics investments, and data quality are the main priorities.

Data Crawling Vs Data Scraping

By doing this, you don't need to waste long hours on work that turns out poorly and lands you in legal trouble. If done properly by people who know what they're doing, these programs will give you the essential help you need to get ahead in your industry. Many people do not understand the difference between data scraping and data crawling. This confusion leads to misunderstandings over what service a company requires. This process is needed for filtering and sorting different types of raw data from different sources into something that is useful and informative. Data scraping is much more specific in what it extracts than data crawling.

For example, web scraping typically requires you to inspect a website's HTML and identify the specific elements that contain the data you want to extract. This is where data scraping services come in handy as the best way to get a large amount of data in the extraction formats you like. Scraping and crawling can go hand-in-hand, but each process has specific use cases.
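To make the idea of inspecting HTML and picking out specific elements concrete, here is a minimal sketch using only Python's standard library. The sample markup and the class names `title` and `price` are assumptions made for illustration; a real page will use different markup, and nested elements would need more careful tracking than this sketch does.

```python
from html.parser import HTMLParser

# Hypothetical product-page fragment; real pages will use different markup.
SAMPLE_HTML = """
<div class="product">
  <h2 class="title">Example Widget</h2>
  <span class="price">$19.99</span>
</div>
"""


class ClassTextExtractor(HTMLParser):
    """Collect the text of every element whose class attribute matches a target."""

    def __init__(self, target_classes):
        super().__init__()
        self.target_classes = set(target_classes)
        self.current = None  # target class of the element being read, if any
        self.found = {}      # class name -> list of extracted strings

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for name in classes:
            if name in self.target_classes:
                self.current = name

    def handle_data(self, data):
        # Only keep non-empty text that sits directly inside a target element.
        if self.current and data.strip():
            self.found.setdefault(self.current, []).append(data.strip())

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the current capture.
        self.current = None


def extract(html, target_classes):
    parser = ClassTextExtractor(target_classes)
    parser.feed(html)
    return parser.found


result = extract(SAMPLE_HTML, ["title", "price"])
print(result)
```

In practice many scrapers use a dedicated parsing library for this step; the standard-library parser is used here only to keep the sketch self-contained.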

Any relevant data is then collected and exported to a different format. Some users will put the scraped data into a spreadsheet, a database, or do further processing with an API. This method can also be used to identify and locate target data on websites. In the case of web scraping, however, we know exactly which web data we need to extract. For example, it could be the HTML element structure of a specific page.
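As a rough illustration of the export step, the sketch below writes scraped records to CSV text that a spreadsheet can open. The records and field names are made up for the example; nothing here reflects a specific tool's output format.

```python
import csv
import io

# Hypothetical scraped records; the field names are illustrative only.
records = [
    {"title": "Example Widget", "price": "19.99"},
    {"title": "Sample Gadget", "price": "5.00"},
]


def to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(records, ["title", "price"])
print(csv_text)
```

Writing to an in-memory buffer keeps the example free of file paths; swapping `io.StringIO()` for an opened file would produce a file that Excel or Google Sheets can import.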

How Web Scrapers Work

In this article, we'll discuss the differences between web scraping and web crawling and how they relate to each other. We will also cover some use cases for both techniques and the tools you can use. Companies that get used to scraping data systematically eventually get more business leads, win a higher market share, and increase their revenue. Spiders, or "crawlers," are algorithmically designed to follow rules, and they work in a similar way to Bing or Google. Data crawling providers scan through web pages, collect and index all the relevant information, and look for links to all the relevant pages.
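The crawling behavior described above (visit a page, index it, follow its links) can be sketched as a breadth-first traversal. This is a simplified illustration, not how any particular search engine works: the `fetch` callable and the in-memory `FAKE_SITE` are assumptions that stand in for real HTTP requests so the example runs without a network.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Gather the href of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch, max_pages=10):
    """Breadth-first crawl: index each page's HTML and queue the links it contains."""
    index = {}
    queue = deque([start_url])
    seen = {start_url}
    while queue and len(index) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched
            continue
        index[url] = html
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return index


# Hypothetical in-memory site used in place of real HTTP requests.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/">home</a> <a href="/c">C</a>',
    "https://example.com/b": "no links here",
    "https://example.com/c": '<a href="/a">back</a>',
}

pages = crawl("https://example.com/", FAKE_SITE.get)
print(list(pages))
```

The `seen` set keeps the crawler from revisiting pages that link back to each other, and `max_pages` caps the run, which is one simple way to stay considerate of the target server.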


Web Crawling Tools

Scrapers do not need to worry about being polite or following any ethical rules. Crawlers, however, need to make sure that they are polite to the servers. They must operate in a way that does not offend the servers, and must be agile enough to extract all the information needed. Often, this information gets duplicated, and multiple pages end up containing the same data. While the bots have no means of recognizing this duplicate data on their own, getting rid of it is essential. Therefore, data de-duplication becomes a part of web crawling.
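One common way to approach the de-duplication step described above is to hash a normalized copy of each page's content and keep only the first page seen for each hash. The sketch below assumes a simple normalization (lowercasing and collapsing whitespace) and uses made-up URLs and text; real crawlers often need fuzzier matching than this.

```python
import hashlib


def fingerprint(text):
    """Hash case- and whitespace-normalized text so trivially different copies match."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(pages):
    """Keep the first URL seen for each distinct content fingerprint."""
    seen = set()
    unique = {}
    for url, text in pages.items():
        digest = fingerprint(text)
        if digest not in seen:
            seen.add(digest)
            unique[url] = text
    return unique


# Hypothetical crawl results: the first two URLs serve the same content.
crawled = {
    "https://example.com/item?id=1": "Blue Widget  - $5",
    "https://example.com/item?id=1&ref=home": "blue widget - $5",
    "https://example.com/item?id=2": "Red Widget - $7",
}

unique_pages = deduplicate(crawled)
print(list(unique_pages))
```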

The Future Of Web Scraping Projects: Data-driven Decision Making

While companies are integrating AI and data-heavy technologies at a high rate, they often run into barriers in finding the talent to help guide those initiatives. Nearly half of the CIOs in a Gartner survey said they were in the market for employees with AI skills, yet 37 percent of those same respondents found such qualifications difficult to recruit. In fact, slowed hiring for AI was cited as the biggest barrier to adoption in an MIT Technology Review and EY survey. From the perspective of a proxy provider, these are our key practices. However, the intrigue deepens when one delves into the domain of web scraping. Given the continual updates websites make to deter evasion, it becomes inefficient to manage configurations manually at a large scale. ML's ability to learn, adapt, and change configurations in real time enables a scraping provider to stay one step ahead of evolving web dynamics. More frameworks, libraries, and no-code solutions are making it easier than ever for developers (and non-developers!) to scrape, which wasn't true eight years ago. Now a data scientist with basic Python experience can build a full-fledged, scalable web scraper on their own.

What Does Data Scraping Do?

It's open source, has full support for TypeScript, and is built on top of other popular Node.js libraries, such as Got Scraping, Cheerio, Puppeteer, and Playwright. It enhances them with web scraping specific features like smart proxy and fingerprint rotation, URL queues, autoscaling, data storage and more. When talking about web scraping protections, we can't omit scraping data from mobile applications. In the past, mobile applications were only sometimes protected against web scraping. Usually, there were unprotected endpoints that required specific headers shared across all of the app installations.
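To give a feel for what a URL queue combined with proxy rotation means, here is a small language-neutral sketch in Python. It is not the API of the library described above; the proxy addresses, the `plan_requests` helper, and the round-robin policy are all assumptions chosen for illustration, and no requests are actually sent.

```python
from collections import deque
from itertools import cycle

# Hypothetical proxy addresses; replace with real ones in practice.
PROXIES = ["proxy-a.example:8080", "proxy-b.example:8080", "proxy-c.example:8080"]


def plan_requests(urls, proxies):
    """Pair each queued URL with the next proxy in round-robin order."""
    queue = deque(urls)        # pending URLs, processed first-in first-out
    rotation = cycle(proxies)  # endlessly repeats the proxy list
    plan = []
    while queue:
        url = queue.popleft()
        plan.append((url, next(rotation)))
    return plan


plan = plan_requests(
    [f"https://example.com/page/{n}" for n in range(1, 5)],
    PROXIES,
)
for url, proxy in plan:
    print(url, "via", proxy)
```

Round-robin is the simplest rotation policy; "smart" rotation as mentioned above would presumably also weigh factors such as which proxies were recently blocked.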

Using Gologin.com For Facebook Web Scraping

Reverse-engineering private APIs involves analyzing the behavior of the API to gain an understanding of its functionality and the data it provides, without access to its documentation or source code. This technique can be used for data scraping when public APIs are not available, allowing users to access otherwise unattainable data. Web scrapers are essential tools for efficient data extraction, with Python being the go-to language due to its user-friendliness and powerful libraries. With so many businesses now using data science, it's no surprise that by the end of 2023, the big data analytics market is expected to grow to $103 billion. The rise of anti-scraping measures and the need to extract data ethically and legally add to the challenges of web data extraction.

Just a few months later, the court determined that hiQ violated LinkedIn's Terms of Service. In the not-so-distant future, AI will dominate every sphere of human endeavor and make life easier. 92% of data analytics professionals say their companies need to increase their use of external data, according to MIT Sloan and Deloitte. AI and ML will make Facebook web scraping more intelligent and efficient. With the rise of artificial intelligence, machine learning and big data, data extraction has become an essential capability for companies looking to source the data they need to stay competitive.

When the other website responds by granting access, the scraper bot then parses the HTML document and transforms it into its own data format. These days, it's becoming the norm and not just a last resort for data interchange. Even if you do not want to use data scraping in your work right away, gaining knowledge and skills in this area is always a good idea, because it will likely become more important than ever in the coming years.

Cloud-based Vs Local Scrapers

In The Follower, the artist matched influencers' posted Instagram photos with live video footage from the same place and moment. The comparison revealed that what goes on behind the scenes of perfect Instagram grids is often boring and trivial. After posting about it on social media, he was promptly banned based on copyright claims.

Easy Data: How To Make Money Web Scraping For Business

Scraping saves time that would have otherwise gone into manual research. A scraping tool allows you to benefit from automated web scraping without having to install anything or learn coding. By finding out what ranks ahead of Octoparse, I give up some keywords, considering there may be some almost unbeatable websites. So the already large numbers are growing at an ever-increasing rate. 2.5 quintillion bytes are created in a day, with 5,053,000,000 GB of Internet traffic. Step 5) Select the delivery method, frequency, file type, and file size.

It is self-evident that WebCopy functions as a data extraction tool for websites. You can refer to How To Get Organic Traffic From Search Engine To Your Blog for more traffic-generating tips. With a number like this, we can see an ever faster-growing customer group in digital marketing. This will not only lead to fewer conversions but also cost you all the trust of your web traffic. On the Internet, this signifies that competition among sellers will be much stronger.

Data Scraper is a Chrome plugin that allows you to scrape data from any HTML web page. You can then upload the data to Microsoft Excel or Google Sheets. The basic subscription option, with 500 free page-scrape credits per month, lets you use Dataminer Scraper for free. In addition, there are premium plans with additional scraping features. Collecting a lot of behavioral data is essential for marketers who want to add value to their data and then use predictive analytics to gain a competitive edge. Today, a company's success depends not only on the big data wave but also on its ability to improve upon existing marketing efforts and deliver more integrated results.

Use Social Media To Reach The Leads

I want to compile a list of offshore, financial, tax, and accounting related websites that do link exchange with other sites. Extract links and meta tags for website promotion, search directory creation, and web research. You should join the bandwagon of using data scraping in your operations before it is too late. Let us understand this process through an example and also go through all the stakeholders involved at the same time.


Main Advantages Of Using Data Scraping For B2B Marketing

It's not just an email list but includes information about the businesses and key decision makers. Recently I was looking for a URL extractor program because I am building a search engine, and I have tried close to 20 of them, all with some missing features. I am just a student and the average price is way too high for me. One data set can be used to derive various inferences that may affect the overall revenue of the business.
